Our feelings are private, or at least they have been. Recent breakthroughs in neuroscience and artificial intelligence are challenging this assumption, while at the same time raising new concerns about ethics, privacy, and the future of brain-computer interaction. If new research is to be believed, you might come home from work one day in a rotten mood, only to have your smart speaker read your feelings immediately and start playing calming music.

A novel artificial intelligence (AI) approach based on wireless signals could help to reveal our inner emotions, according to new research from Queen Mary University of London.

This is one of the possible applications of a new neural network that engineers at Queen Mary University of London have trained to instantly detect human emotions by bombarding people with radio waves and picking up emotional signals such as changes in their heartbeat. The algorithm can detect feelings like anxiety, disgust, excitement, and relaxation with 71 percent accuracy, according to research published earlier this month in PLOS One. It’s far from ideal, but it’s impressive enough that it could find real-world applications in our lives.

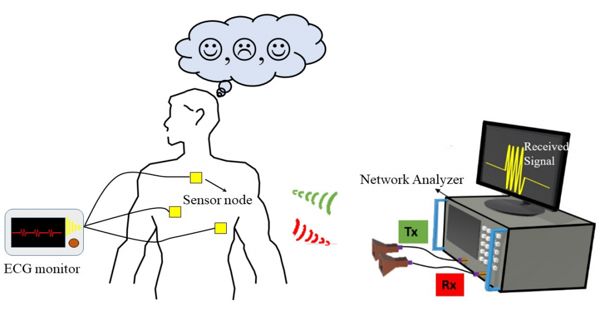

Participants were first asked to watch a video chosen by the researchers for its ability to elicit one of four basic types of emotion: rage, sorrow, excitement and enjoyment. As each individual watched the video, the researchers emitted harmless radio signals, like those transmitted by any wireless system such as radar or WiFi, towards the individual and analyzed the signals that bounced back. By analyzing the changes in these signals caused by subtle body movements, the researchers were able to extract information about the individual’s heart rate and breathing rate.
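To make the idea concrete, here is a minimal sketch of how a breathing rate and a heart rate might be pulled out of a reflected signal. It is not the study's method, which this article does not describe in detail. The signal is simulated, and every name and number in it (`simulate_reflection`, `dominant_frequency`, the 20 Hz sample rate, the amplitudes, and the frequency bands) is an assumption chosen for illustration.

```python
import math


def simulate_reflection(duration_s, sample_rate_hz, breaths_per_min, beats_per_min):
    """Hypothetical reflected signal: a large chest motion from breathing
    plus a much smaller motion from the heartbeat (amplitudes are assumed)."""
    n = int(duration_s * sample_rate_hz)
    breath_hz = breaths_per_min / 60
    beat_hz = beats_per_min / 60
    return [
        1.0 * math.sin(2 * math.pi * breath_hz * i / sample_rate_hz)
        + 0.1 * math.sin(2 * math.pi * beat_hz * i / sample_rate_hz)
        for i in range(n)
    ]


def dominant_frequency(samples, sample_rate_hz, low_hz, high_hz, step_hz=0.01):
    """Scan a frequency band and return the frequency (Hz) with the most power."""
    mean = sum(samples) / len(samples)
    centered = [s - mean for s in samples]  # remove any constant offset
    best_freq, best_power = low_hz, -1.0
    steps = int(round((high_hz - low_hz) / step_hz))
    for k in range(steps + 1):
        freq = low_hz + k * step_hz
        angle = 2 * math.pi * freq / sample_rate_hz
        re = sum(c * math.cos(angle * i) for i, c in enumerate(centered))
        im = sum(c * math.sin(angle * i) for i, c in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq


# Simulate 40 seconds of signal with assumed ground-truth rates.
signal = simulate_reflection(duration_s=40, sample_rate_hz=20,
                             breaths_per_min=15, beats_per_min=72)

# Search separate bands, since breathing is much slower than the heartbeat.
breathing_bpm = 60 * dominant_frequency(signal, 20, 0.1, 0.5)
heart_bpm = 60 * dominant_frequency(signal, 20, 0.8, 2.0)
print(f"breathing: {breathing_bpm:.1f}/min, heart: {heart_bpm:.1f}/min")
```

Searching two separate frequency bands is the key design choice here: the breathing motion is far larger than the heartbeat motion, so looking for a single strongest frequency would find only the breathing. Real reflections would also contain noise and other body movement, which this sketch ignores.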

The team, led by Yang Hao, Dean of Research at the Faculty of Science and Engineering, used a small transmitting antenna to bounce radio waves off subjects. From the reflected signals, they assembled a collection of heart rhythms recorded while the subjects watched emotionally charged videos that triggered relief, anxiety, disgust, and joy. The team also connected the subjects to an electrocardiogram to verify that the signals the antenna was picking up were accurate. They then ran the data through a deep neural network and found that the system correctly identified the participants’ emotional state 71% of the time.

The study team also aims to explore public acceptance and the ethical questions surrounding the use of this technology. Such concerns would not be unexpected, since the technology conjures up a rather Orwellian image of the ‘Thought Police’ of 1984. In the novel, the Thought Police are specialists in reading people’s faces to ferret out views not sanctioned by the state, even though they never perfected the art of understanding exactly what a person was thinking.

Black Hat

According to Defense One, the algorithm is trained to detect variations in a person’s heartbeat, as picked up by radio waves, and to interpret them as emotions. Unsurprisingly, the military-focused publication was interested in whether the device could be used in an interrogation setting, but lead author and Queen Mary engineer Yang Hao said that was not the point.

“As for its implications for… national security, more research needs to be done, just like other ethical issues and the responsible use of this technology,” Hao told Defense One.

Learning Curve

Of course, 71 percent accuracy is not optimal, but the analysis indicates that the neural network greatly outperforms other, less advanced AI architectures.

For example, according to the report, a more conventional machine learning algorithm guessed correctly only about 40 percent of the time. So while we don’t yet have computers that grasp the complex experience of human emotion, we’re at least moving closer to tools that help us decipher it.

Detecting emotions

Traditionally, emotion detection has relied on assessing visible signs such as facial expressions, speech, body gestures or eye movements. However, these approaches can be unreliable because they do not adequately capture an individual’s internal feelings, and researchers are increasingly looking to ‘invisible’ signals, such as ECG, to understand emotions.

ECG signals record electrical activity in the heart, providing a link between the nervous system and the rhythm of the heart. However, these signals have largely been measured using electrodes placed on the body, and researchers have recently been looking at non-invasive techniques that use radio waves to detect them instead.