One of the proposed applications for NeckFace, one of the first necklace-type wearable sensing technologies, is tracking facial movements—and possibly their cause. NeckFace was developed by a team led by Cheng Zhang, assistant professor of information science at Cornell’s Ann S. Bowers College of Computing and Information Science. It can continuously track full facial expressions by using infrared cameras to capture images of the chin and face from beneath the neck.

Researchers at Cornell University have created a smart necklace that can track full facial expressions without the use of a frontal camera. Their findings are detailed in the paper “NeckFace: Continuously Tracking Full Facial Expressions on Neck-mounted Wearables,” which was published on June 24 in the Proceedings of the ACM on Interactive, Mobile, Wearable, and Ubiquitous Technologies.

Tuochao Chen (Peking University) and Yaxuan Li (McGill University), visiting students in the Smart Computer Interfaces for Future Interactions (SciFi) Lab, as well as Cornell MPS student Songyun Tao, are co-lead authors. Other authors include Cornell Ph.D. students in information science HyunChul Lim, Mose Sakashita, and Ruidong Zhang, as well as François Guimbretière, professor of information science at Cornell Bowers College.

Human facial movements convey emotions and help us communicate nonverbally and perform physical activities, such as eating and drinking.

NeckFace is the next generation of Zhang’s previous work, which resulted in C-Face, a similar device but in the form of a headset. NeckFace, according to Zhang, offers significant improvements in performance and privacy, as well as the option of a less-obtrusive neck-mounted device.

Zhang sees many applications for this technology, in addition to potential emotion tracking: virtual conferencing when a front-facing camera is not available; facial expression detection in virtual reality scenarios; and silent speech recognition.

“The ultimate goal is for the user to be able to track their own behaviors through continuous tracking of facial movements,” said Zhang, the SciFi Lab’s principal investigator. “And hopefully, this will provide us with a wealth of information about your physical and mental activities.”

NeckFace, according to Guimbretière, has the potential to revolutionize video conferencing.

“The user would not have to be careful to remain in the field of view of a camera,” he explained. “Instead, NeckFace can recreate the perfect headshot as we move around in a classroom or even outside to walk with a distant friend.”

Zhang and his colleagues conducted a user study with 13 participants to test the effectiveness of NeckFace. Each participant was asked to perform eight facial expressions while sitting and eight more while walking. In the sitting scenarios, participants were also asked to rotate their heads while making facial expressions and to remove and remount the device in a single session.

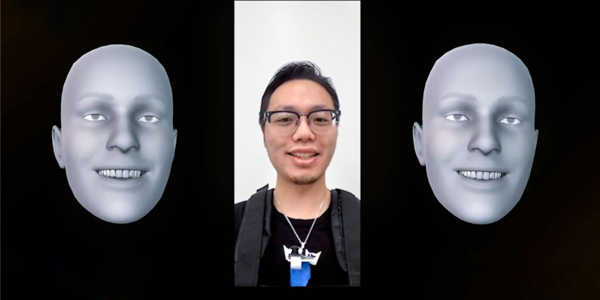

NeckFace was tested in two ways: as a neckband draped around the back of the neck with twin cameras just below the collarbone level, and as a necklace with a pendant-like infrared (IR) camera device hanging below the neck.

The researchers compared facial movement data collected with NeckFace against baseline data collected with the TrueDepth 3D camera on an iPhone X. Across the sitting, walking, and remounting exercises, study participants expressed 52 different facial shapes.

Using deep learning, the team found that NeckFace detected facial movement with nearly the same accuracy as direct measurements taken with the phone camera. The neckband was found to be more accurate than the necklace, possibly because the neckband’s two cameras could capture more information from both sides than the necklace’s center-mounted camera.
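A comparison of this kind can be illustrated with a minimal sketch. It assumes, hypothetically, that each captured frame is represented as 52 numeric values (one per facial shape) and that accuracy is summarized as the mean absolute error against the phone camera's measurements. The article does not state which metric or data format the team used, the function name is illustrative, and the numbers below are synthetic rather than results from the study.

```python
import random

NUM_SHAPES = 52  # number of facial shapes mentioned in the study


def mean_absolute_error(predicted, reference):
    """Average absolute difference between two equal-length frame sequences.

    Each frame is a list of numeric values (a hypothetical representation;
    the article does not describe the actual data format).
    """
    if len(predicted) != len(reference):
        raise ValueError("sequences must contain the same number of frames")
    total = 0.0
    count = 0
    for pred_frame, ref_frame in zip(predicted, reference):
        if len(pred_frame) != len(ref_frame):
            raise ValueError("frames must have the same number of values")
        for p, r in zip(pred_frame, ref_frame):
            total += abs(p - r)
            count += 1
    if count == 0:
        raise ValueError("no values to compare")
    return total / count


# Synthetic data for illustration only; these are not results from the study.
rng = random.Random(0)
reference = [[rng.random() for _ in range(NUM_SHAPES)] for _ in range(100)]
# Simulated wearable output: the reference plus small noise, clamped to [0, 1].
predicted = [
    [min(1.0, max(0.0, value + rng.uniform(-0.05, 0.05))) for value in frame]
    for frame in reference
]

error = mean_absolute_error(predicted, reference)
print(f"mean absolute error: {error:.3f}")
```

A lower value means the wearable's output stays closer to the reference measurements. Running the same calculation separately for the neckband and the necklace would be one way to compare the two designs.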

Zhang believes that the device, once optimized, could be especially useful in the mental health field for tracking people’s emotions throughout the day. While people don’t always show their emotions on their faces, the amount of facial expression change over time may indicate emotional swings, he said.

“Can we actually see how your emotions change throughout the day?” he said. “We could have a database on how you’re doing physically and mentally throughout the day with this technology, which means you could track your own behaviors. A doctor could also use the information to back up a decision.”