Researchers have discovered a new method for correcting errors in quantum computer calculations, potentially removing a major impediment to a powerful new realm of computing.

Error correction is a well-developed field in conventional computing. Every cellphone, for example, relies on checks and fixes to send and receive data over choppy airwaves. Quantum computers have enormous potential to solve complex problems that conventional computers cannot, but this power depends on harnessing the extremely fleeting behaviors of subatomic particles. These behaviors are so transient that even inspecting them for errors can cause the entire system to collapse.

An interdisciplinary team led by Jeff Thompson, an associate professor of electrical and computer engineering at Princeton, with collaborators Yue Wu and Shruti Puri at Yale University and Shimon Kolkowitz at the University of Wisconsin-Madison, showed in a theoretical paper published in Nature Communications that a quantum computer’s tolerance for faults can be dramatically improved while reducing the amount of redundant information needed to isolate and fix errors. The new technique roughly quadruples the acceptable error rate, from about 1% to 4%, a level within reach of quantum computers now in development.

“The fundamental challenge to quantum computers is that the operations you want to do are noisy,” Thompson explained, meaning that calculations can fail in many different ways.

In a conventional computer, an error can be as simple as a bit of memory accidentally flipping from a 1 to a 0, or as messy as one wireless router interfering with another. A common approach for handling such faults is to build in some redundancy, so that each piece of data is compared with duplicate copies. However, that approach increases the amount of data needed and creates more possibilities for errors. Therefore, it only works when the vast majority of information is already correct. Otherwise, checking wrong data against wrong data leads deeper into a pit of error.

“If your baseline error rate is too high, redundancy is a bad strategy,” Thompson said. “Getting below that threshold is the main challenge.”
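To make the idea of a threshold concrete, here is a toy classical calculation, not the team’s quantum scheme: each copy of a bit is assumed to flip independently with probability `p`, and a majority vote over `n` copies decides the value. For this simple repetition code, redundancy helps only while `p` is below 50%; above that, adding copies makes the answer worse. Quantum codes have far lower thresholds, such as the 1% and 4% figures discussed in this article. The function name is illustrative.

```python
from math import comb


def majority_vote_failure(p: float, n: int) -> float:
    """Probability that a majority vote over n copies gives the wrong bit,
    assuming each copy flips independently with probability p (n must be odd)."""
    if n % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    # The vote is wrong when more than half of the n copies have flipped.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


for p in (0.1, 0.4, 0.6):
    # Failure rate with 1, 3 and 5 copies at the same raw error rate p.
    print(p, [round(majority_vote_failure(p, n), 4) for n in (1, 3, 5)])
```

At p = 0.1 or 0.4 the failure rate falls as copies are added. At p = 0.6 it rises, which is the sense in which redundancy becomes a bad strategy when the baseline error rate is too high.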

Rather than focusing solely on reducing the number of errors, Thompson’s team made errors more visible. The team dug deep into the actual physical causes of error and designed their system so that the most common source of error effectively eliminates the damaged data rather than simply corrupting it. According to Thompson, this behavior represents a type of error known as an “erasure error,” which is fundamentally easier to weed out than data that is corrupted but still looks like all the other data.

In a conventional computer, if a packet of supposedly redundant data arrives as 11001, it may be dangerous to assume that the slightly more prevalent 1s are correct and the 0s are incorrect. But if the information arrives as 11XX1, where the corrupted bits are clearly marked, the case for reading it as all 1s is much more compelling.

“These erasure errors are vastly easier to correct because you know where they are,” Thompson said. “They can be excluded from the majority vote. That is a huge advantage.”
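A minimal sketch of the 11001 versus 11XX1 example, assuming a simple majority vote over redundant copies of one bit with erased copies marked `X`. The helper names are hypothetical. It shows why knowing where the errors are matters: erased copies are simply left out of the vote, and an `n`-copy repetition code can always recover from up to `n - 1` erasures but only from fewer than half that many hidden flips.

```python
ERASED = "X"  # marks a copy whose loss is known (an erasure)


def majority_decode(received: str) -> str:
    """Majority vote over redundant copies of one bit, ignoring erased copies."""
    usable = [b for b in received if b != ERASED]
    ones, zeros = usable.count("1"), usable.count("0")
    if ones == zeros:
        # Covers both a genuine tie and the case where every copy was erased.
        raise ValueError("no majority among the surviving copies")
    return "1" if ones > zeros else "0"


def vote_margin(received: str) -> int:
    """How many surviving copies separate the winning value from the losing one."""
    usable = [b for b in received if b != ERASED]
    return abs(usable.count("1") - usable.count("0"))


def worst_case_tolerance(n_copies: int) -> tuple[int, int]:
    """(hidden flips always corrected, erasures always corrected) for n copies.

    Hidden flips: the majority must still be right, so fewer than half may flip.
    Erasures: a single surviving, uncorrupted copy is enough."""
    return (n_copies - 1) // 2, n_copies - 1


print(majority_decode("11001"), vote_margin("11001"))  # decided by a single vote
print(majority_decode("11XX1"), vote_margin("11XX1"))  # unanimous among survivors
print(worst_case_tolerance(5))
```

With five copies, a plain majority vote is guaranteed to survive only two hidden flips, but it survives up to four erasures as long as the remaining copy is intact.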

Erasure errors are well understood in conventional computing, but researchers had not previously considered trying to engineer quantum computers to convert errors into erasures, Thompson said.

As a practical matter, their proposed system could withstand an error rate of 4.1%, which Thompson said is well within reach of current quantum computers. In previous schemes, state-of-the-art error correction could handle error rates only below 1%, which Thompson said is at the edge of what any current quantum system with a large number of qubits can achieve.
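A rough illustration of why a higher threshold matters, assuming the common rule of thumb that a distance-`d` code’s logical error rate scales like `(p / p_th) ** ((d + 1) / 2)`. The constant prefactor is set to 1, and the 0.8% physical error rate and 1e-6 target are hypothetical choices; only the 1% and 4.1% thresholds come from the article. The numbers are only indicative, but the trend is the point: the further the hardware sits below threshold, the less redundancy (smaller `d`) is needed.

```python
from typing import Optional


def logical_error_estimate(p: float, p_th: float, d: int) -> float:
    """Rule-of-thumb logical error rate for a distance-d code (d odd):
    p_L ~ (p / p_th) ** ((d + 1) / 2), with the constant prefactor set to 1."""
    return (p / p_th) ** ((d + 1) // 2)


def smallest_distance(p: float, p_th: float, target: float, d_max: int = 301) -> Optional[int]:
    """Smallest odd distance whose estimated logical error rate meets target,
    or None if p is not below threshold (or d_max is too small)."""
    if p >= p_th:
        return None  # at or above threshold, adding redundancy does not help
    for d in range(3, d_max + 1, 2):
        if logical_error_estimate(p, p_th, d) <= target:
            return d
    return None


p = 0.008  # hypothetical physical error rate of 0.8%
for p_th in (0.01, 0.041):  # threshold figures quoted in the article
    print(p_th, smallest_distance(p, p_th, target=1e-6))
```

Under these assumptions, the same hardware needs a far larger code to reach the target with a 1% threshold than with a 4.1% threshold.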

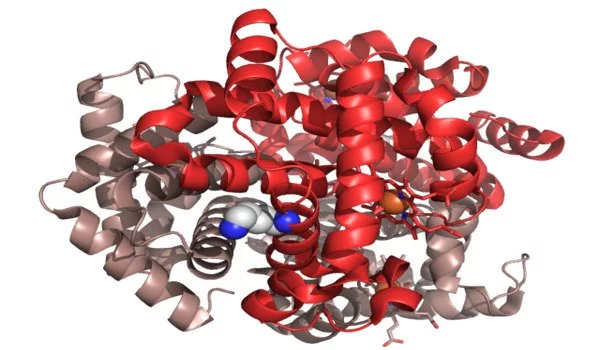

The team’s ability to generate erasure errors turned out to be an unanticipated benefit of a decision Thompson made years ago. His research focuses on “neutral atom qubits,” which store quantum information (a “qubit”) in a single atom. His group was the first to use the element ytterbium for this purpose. Thompson explained that the group chose ytterbium in part because it has two electrons in its outermost electron shell, whereas most other neutral atom qubits have only one.

“I think of it as a Swiss Army knife, and this ytterbium is the bigger, fatter Swiss Army knife,” Thompson said. “That extra bit of complexity you get from having two electrons gives you a lot of unique tools.”

One of those extra tools proved useful for error elimination. The researchers proposed pumping ytterbium’s electrons from their stable “ground state” into excited states known as “metastable states,” which can be long-lived under the right conditions but are inherently fragile. Counterintuitively, they propose encoding the quantum information in these fragile states.

“It’s like the electrons are on a tightrope,” Thompson said. The system is engineered so that the same disturbances that cause errors also knock the electrons off the tightrope. As a bonus, once they fall to the ground state, the electrons scatter light in a very visible way, so shining a light on a collection of ytterbium qubits causes only the faulty ones to light up. Those that light up are flagged as erasures: errors whose locations are known.
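The detection step can be sketched as a toy simulation. This is a simplified model, not the team’s physics. It assumes that each qubit errs with some probability and that a fixed fraction of those errors (the hypothetical `erasure_fraction`) knock the atom to the ground state, where it lights up under imaging. The remaining errors stay hidden. The function names and parameter values are illustrative only.

```python
import random


def simulate_round(n_qubits: int, p_error: float, erasure_fraction: float,
                   rng: random.Random) -> tuple[list[int], list[int]]:
    """Toy model of one round on an array of qubits.

    Each qubit suffers an error with probability p_error. A fraction
    erasure_fraction of those errors drop the atom to the ground state,
    where it would light up under imaging (a flagged erasure); the rest
    stay hidden. Returns (flagged indices, hidden-error indices)."""
    flagged, hidden = [], []
    for i in range(n_qubits):
        if rng.random() < p_error:
            if rng.random() < erasure_fraction:
                flagged.append(i)  # fluoresces: location is known
            else:
                hidden.append(i)  # looks like every other qubit
    return flagged, hidden


def mark_erasures(bits: str, fluorescing: set[int]) -> str:
    """Replace every position that lit up during imaging with 'X'."""
    return "".join("X" if i in fluorescing else b for i, b in enumerate(bits))


flagged, hidden = simulate_round(1000, p_error=0.04, erasure_fraction=0.9, rng=random.Random(1))
print(len(flagged), len(hidden))
print(mark_erasures("11001", {2, 3}))  # the "11XX1" example from the text
```

The higher the fraction of errors that end in fluorescence, the more of them reach the decoder as known-location erasures rather than hidden corruption.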

This advance required combining insights from quantum computing hardware and quantum error correction theory, drawing on the team’s interdisciplinary expertise and close collaboration. While the mechanics of this setup are specific to Thompson’s ytterbium atoms, he said that engineering qubits to generate erasure errors could be a useful goal in other systems, many of which are in development around the world, and that the group is continuing to work on it.

“We see this project as laying out a kind of architecture that could be applied in a variety of ways,” Thompson explained, adding that other groups have already begun engineering their systems to convert errors into erasures. “There is already a lot of interest in finding adaptations for this work.”

Thompson’s group is now working on demonstrating the conversion of errors to erasures in a small working quantum computer with tens of qubits.