Eight years ago, a woman lost her ability to speak because of ALS, also known as Lou Gehrig’s disease, which causes progressive paralysis. She can still make sounds, but her words have become unintelligible, so she relies on an iPad or a writing board to communicate.

After volunteering to receive a brain implant, the woman can now communicate phrases like “I don’t own my home” and “It’s just tough” at a rate approaching normal speech.

That is the claim in a paper that a team from Stanford University posted over the weekend on the preprint site bioRxiv. The study has not yet been formally reviewed by other researchers. According to the authors, their volunteer, identified only as “subject T12,” broke previous records by using the brain-reading implant to communicate at 62 words per minute, three times the previous record.

Philip Sabes, a researcher at the University of California, San Francisco, who was not involved in the project, called the findings a “major breakthrough” and predicted that experimental brain-reading technology may soon be ready to leave the lab and become a useful product.

If the device were ready, “the performance in this study is already at a level that many people who cannot talk would want,” says Sabes. “People will desire this.”

People without speech impairments typically talk at a rate of about 160 words per minute. Even in an era of keyboards, thumb typing, emojis, and internet abbreviations, speech remains the fastest form of human-to-human communication.

The latest study was carried out at Stanford University. The preprint, released on January 21, drew extra attention on Twitter and other social media because its co-lead author, Krishna Shenoy, died of pancreatic cancer the same day.

Shenoy devoted his career to speeding up communication through brain interfaces, meticulously keeping track of his findings on the lab website. As we reported in the special computing issue of MIT Technology Review, another volunteer Shenoy worked with in 2019 was able to use his thoughts to type at 18 words per minute, a record at the time.

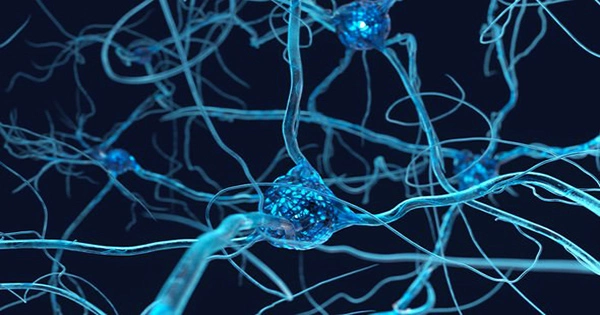

Shenoy’s team places its brain-computer interfaces in the motor cortex, the part of the brain most involved in movement. That lets researchers record activity from a few dozen neurons at once and look for patterns that reflect the movements a person is thinking of, even if the person is paralyzed.

In earlier research, paralyzed volunteers were asked to imagine making hand movements. By “decoding” their neural signals in real time, implants have let them steer a cursor around a screen, select letters on a virtual keyboard, play video games, and even control a robotic arm.

The Stanford team’s latest study asked whether neurons in the motor cortex also carry useful information about speech movements. In other words, could the researchers detect how “subject T12” was trying to move her mouth, tongue, and vocal cords as she attempted to talk?

These are small, subtle movements, and according to Sabes, one significant finding is that just a few neurons held enough information for a computer program to predict, with good accuracy, the words the patient was trying to say. Shenoy’s team sent that information to a computer screen, where the patient’s words appeared as the computer spoke them.

The new finding builds on earlier research by Edward Chang at the University of California, San Francisco, who has described speech as one of the most complicated movements a person can perform. We push air out, add vibrations that make it audible, and shape it into words with our mouth, lips, and tongue. To make the sound “f,” you place your upper teeth on your lower lip and push air out, and that is only one of the many mouth movements needed to talk.

A Path Forward: Chang previously used electrodes placed on top of the brain to let a volunteer speak through a computer, but in their preprint the Stanford researchers say their system is more accurate and three to four times faster.

The researchers, who included Shenoy and neurosurgeon Jaimie Henderson, concluded that their findings “suggest a possible road forward to restore speech to persons with paralysis at conversational speeds.”

David Moses, who works with Chang’s team at UCSF, says the most recent study sets “amazing new performance benchmarks.” Yet even as records continue to be broken, he adds, “it will become increasingly critical to demonstrate robust and reliable performance across multi-year time frames.” Any commercial brain implant could have a hard time getting past regulators, especially if it degrades over time or if the accuracy of its recordings declines.

Future developments are probably going to involve both more advanced implants and closer AI integration.

The current system already uses a couple of different kinds of machine learning software. To improve its accuracy, the Stanford researchers used software that predicts which word typically comes next in a sentence. “I” is more often followed by “am” than “ham,” even though the two words sound similar and could produce similar patterns in someone’s brain.

Adding the word prediction system increased how quickly and accurately the subject could speak.
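The “am” versus “ham” idea can be illustrated with a small sketch. This is not the Stanford team’s software: the bigram counts, the decoder probabilities, and the function names `lm_log_prob` and `rescore` are all hypothetical, invented only to show how a simple next-word model can override a slightly mistaken guess from the neural decoder.

```python
import math

# Hypothetical counts of how often `word` follows `prev` in a text corpus.
# These numbers are invented for illustration only.
BIGRAM_COUNTS = {
    ("i", "am"): 900,
    ("i", "ham"): 1,
    ("i", "have"): 600,
}


def lm_log_prob(prev, word, counts=BIGRAM_COUNTS, vocab_size=1000):
    """Add-one smoothed log P(word | prev) estimated from bigram counts."""
    total = sum(c for (p, _), c in counts.items() if p == prev)
    return math.log((counts.get((prev, word), 0) + 1) / (total + vocab_size))


def rescore(prev, decoder_probs, lm_weight=1.0):
    """Return the candidate with the best combined decoder + language-model score."""
    def score(word):
        return math.log(decoder_probs[word]) + lm_weight * lm_log_prob(prev, word)
    return max(decoder_probs, key=score)


# Hypothetical decoder output: the neural evidence alone slightly prefers "ham".
decoder_probs = {"am": 0.45, "ham": 0.55}

print("decoder alone:", max(decoder_probs, key=decoder_probs.get))
print("with language model:", rescore("i", decoder_probs))
```

In this toy case the decoder alone picks “ham,” while the combined score picks “am,” because the language model considers “I ham” far less likely than “I am.”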

Language Models: However, more recent “large” language models, such as GPT-3, can write entire essays and answer questions. Connecting these to brain interfaces could let people speak even faster, because the system would be better at guessing what they are trying to say from partial information. The recent success of large language models leads Sabes to think a speech prosthesis is close at hand, because you might not need such an impressive input to get speech out.

Shenoy’s team is a member of the BrainGate consortium, which has implanted electrodes in the brains of more than a dozen participants.

They use an implant known as the Utah Array, a rigid metal square with roughly 100 needle-like electrodes.

Some companies, including Elon Musk’s brain interface company, Neuralink, and a startup called Paradromics, say they have developed more advanced interfaces that can record from thousands or even tens of thousands of neurons at once.

Some skeptics have questioned whether measuring from more neurons at once will make any difference. The latest study suggests it will, especially if the task is to read complex movements such as speech from the brain.

The Stanford researchers found that the more neurons they read from at once, the easier it was to decipher what “T12” was trying to say.
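A toy simulation can show why more recorded neurons might help. It is only a sketch under strong assumptions and is not the Stanford team’s decoder or data: each hypothetical “word” evokes a fixed random firing pattern, every trial adds Gaussian noise, and a nearest-pattern decoder that already knows the true patterns guesses the word. The function name `simulate_accuracy` and all parameter values are invented for illustration.

```python
import random


def simulate_accuracy(n_neurons, n_words=5, n_trials=500, noise_sd=3.0, seed=0):
    """Fraction of simulated trials in which the intended word is decoded correctly."""
    rng = random.Random(seed)
    # Assumption: each word evokes a fixed mean activity pattern across neurons.
    tuning = [[rng.gauss(0.0, 1.0) for _ in range(n_neurons)]
              for _ in range(n_words)]
    correct = 0
    for _ in range(n_trials):
        target = rng.randrange(n_words)
        # Noisy recording of the pattern for the intended word.
        observed = [m + rng.gauss(0.0, noise_sd) for m in tuning[target]]
        # Nearest-pattern decoding: pick the word whose pattern is closest.
        guess = min(
            range(n_words),
            key=lambda w: sum((o - m) ** 2 for o, m in zip(observed, tuning[w])),
        )
        correct += guess == target
    return correct / n_trials


for n in (5, 20, 100):
    print(f"{n:>3} neurons: accuracy {simulate_accuracy(n):.2f}")
```

In this simplified setup, noise averages out across neurons, so accuracy rises as the neuron count grows. Real recordings are far messier, and the size of the benefit in practice is an empirical question.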

“This is a major deal,” says Sabes, a former senior scientist at Neuralink, “because it shows that efforts by firms like Neuralink to put 1,000 electrodes into the brain will matter if the task is sufficiently rich.”