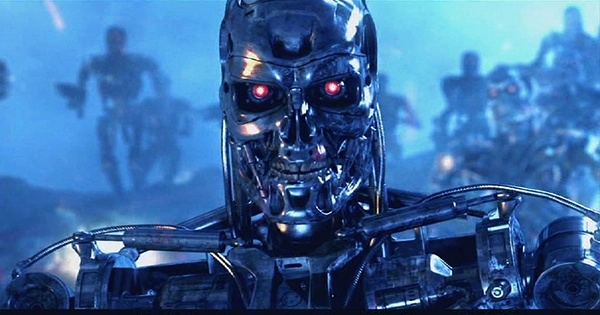

In the 1984 film The Terminator, Skynet, an artificially intelligent (AI) defense network, becomes self-aware and launches a worldwide nuclear war to wipe out humanity. The film plays on two of humanity’s greatest worries at the time: nuclear apocalypse and a time-traveling Arnold Schwarzenegger robot. However, our anxieties and notions about artificial intelligence have evolved over the past (oh god) 37 years, and the director of The Terminator has provided an update on how he believes Skynet might take over the globe without needing nuclear weapons at all.

In an interview with BBC News, James Cameron discussed how Skynet might instead employ fake news and deepfakes to convince humankind to kill itself. “It would actually look a lot like what’s going on right now if Skynet were to take over and wipe us out,” Cameron stated in the interview. “It won’t take nuclear weapons to wipe out the entire biosphere and environment; it will be much easier and cost far less energy to just turn our minds against ourselves.”

According to the director, who has worked with complex special effects in films such as Avatar, deepfakes could be used to create stress and cause damage before the news cycle has had time to debunk the video in question. “All Skynet would have to do is deepfake a number of people, put them against each other, build up a lot of animosity, and then run this massive deepfake on humanity,” he said.

Cameron believes that the technology is not yet good enough to fool the world, or at least not enough of it, but that this will change with time. When it does, he believes that people will be too trusting of what they see in the media to protect themselves. The filmmaker is also concerned about who will be in charge when AI does arise. “I’m always wary of new technologies, as should we all be. Every technological advancement that has ever been made has become weaponized. When I tell AI experts this, they always answer, ‘No, no, we’ve got this under control. We simply set the correct goals for the AIs,’” he went on to explain.

“So, who decides what those objectives are? Aren’t these the folks that put up the money for research, all either defense or large business? So you’re either going to teach these new sentient creatures to be selfish or murderous?” “I mean, I might be a projection of an AI right now,” he concluded, adding unnecessary uncertainty to the interview.