Engineers at Caltech, ETH Zurich, and Harvard are working on artificial intelligence (AI) that will allow autonomous drones to navigate by using ocean currents rather than fighting their way through them.

“When we want robots to explore the deep ocean, especially in swarms, it’s nearly impossible to control them with a joystick from the surface, which is 20,000 feet away. We also can’t provide them with information about the local ocean currents they need to navigate because we can’t detect them from the surface. Instead, at some point, we’ll need ocean-borne drones to be able to make their own decisions about how to move,” says John O. Dabiri (MS ’03, PhD ’05), Centennial Professor of Aeronautics and Mechanical Engineering and corresponding author of a Nature Communications paper on the research.

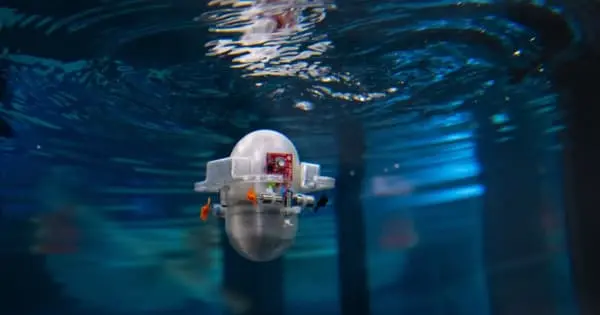

The AI’s performance was evaluated using computer simulations, but the team also built a palm-sized robot that runs the algorithm on a tiny computer chip that could power seaborne drones on Earth and on other worlds. The goal is to develop an autonomous system to monitor the state of the world’s oceans, for example by combining the algorithm with prosthetics previously developed to help jellyfish swim faster and on command. Fully mechanical robots running the algorithm could even explore the oceans of other worlds, such as Enceladus or Europa.

In either scenario, drones would need to decide on their own where to go and the most efficient way to get there. To do so, they will most likely have only the data they can collect themselves, such as information about the water currents around them.

To address this challenge, the researchers used reinforcement learning (RL) networks. Unlike conventional neural networks, RL networks do not train on a static data set; they train as quickly as they can accumulate experience. This scheme lets them run on much smaller computers. For this project, the team wrote software that can be installed and run on a Teensy, a 2.4-by-0.7-inch microcontroller that can be purchased for less than $30 on Amazon and consumes less than a half watt of power.
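To make the idea of learning from experience as it arrives more concrete, here is a minimal sketch of a single tabular Q-learning update. The article does not say which RL algorithm the team used, so Q-learning is only a stand-in, and the function name `q_update`, the state labels, the action labels, and the values of `alpha` and `gamma` are all assumptions made for illustration.

```python
from collections import defaultdict


def q_update(q, state, action, reward, next_state, actions, alpha=0.1, gamma=0.9):
    """Apply one Q-learning update from a single experienced transition."""
    # Value of the best action available from the state we ended up in.
    best_next = max(q[(next_state, a)] for a in actions)
    target = reward + gamma * best_next
    # Nudge the current estimate a fraction alpha of the way toward the target.
    q[(state, action)] += alpha * (target - q[(state, action)])
    return q[(state, action)]


# Hypothetical usage: "s0", "s1", "coast", and "thrust" are placeholder labels.
q = defaultdict(float)  # unseen (state, action) pairs start at 0.0
actions = ("coast", "thrust")
updated = q_update(q, "s0", "coast", 1.0, "s1", actions)
```

Each call consumes one transition and then discards it, so no training set has to be stored. That property is part of what makes this style of learning plausible on a small microcontroller, although a real controller would likely replace the lookup table with a small neural network.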

To train the AI, the team used a computer simulation in which flow past an obstacle in water created several vortices moving in opposite directions. The AI learned to take advantage of low-velocity regions in the wake of the vortices so that it could coast to the target location with minimal power. The simulated swimmer only had access to information about the water currents in its immediate vicinity, but it quickly learned how to use the vortices to coast toward the desired target. In a physical robot, the AI would similarly only have access to information gathered from an onboard gyroscope and accelerometer, both of which are small and inexpensive sensors for a robotic platform.
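The toy sketch below illustrates the general idea of a swimmer that learns from local flow information alone. It is not the team's simulation: the two-observation world, the action names, the progress and energy numbers, and the use of tabular Q-learning are all assumptions chosen to keep the example small. The swimmer senses only whether the local current helps or hinders it, and it is rewarded for progress toward the target minus the energy it spends.

```python
import random

# Hypothetical toy world (not the paper's vortex simulation): the swimmer
# senses only whether the local current helps or hinders its progress.
OBSERVATIONS = ("adverse", "favorable")
ACTIONS = ("coast", "thrust", "sidestep")

# Assumed numbers, chosen only for illustration.
PROGRESS = {  # distance gained toward the target in one step
    ("favorable", "coast"): 1.0,
    ("favorable", "thrust"): 2.0,
    ("adverse", "coast"): -1.0,
    ("adverse", "thrust"): 0.0,
}
ENERGY = {"coast": 0.0, "thrust": 1.5, "sidestep": 0.5}


def step(obs, action):
    """Return (next_obs, reward) for one deterministic step."""
    if action == "sidestep":
        # Moving sideways puts the swimmer in the oppositely directed current.
        next_obs = "favorable" if obs == "adverse" else "adverse"
        progress = 0.0
    else:
        next_obs = obs
        progress = PROGRESS[(obs, action)]
    return next_obs, progress - ENERGY[action]


def train(steps=20000, alpha=0.5, gamma=0.9, seed=0):
    """Off-policy tabular Q-learning with uniformly random exploration."""
    rng = random.Random(seed)
    q = {(o, a): 0.0 for o in OBSERVATIONS for a in ACTIONS}
    obs = "adverse"
    for _ in range(steps):
        action = rng.choice(ACTIONS)
        next_obs, reward = step(obs, action)
        best_next = max(q[(next_obs, a)] for a in ACTIONS)
        q[(obs, action)] += alpha * (reward + gamma * best_next - q[(obs, action)])
        obs = next_obs
    return q


def greedy_policy(q):
    """Pick the highest-valued action for each local observation."""
    return {o: max(ACTIONS, key=lambda a: q[(o, a)]) for o in OBSERVATIONS}


q_table = train()
policy = greedy_policy(q_table)
print(policy)
```

With these assumed numbers, the learned policy is to coast when the local current is favorable and to sidestep out of an adverse current rather than thrust against it. That loosely mirrors the energy-saving behavior described above, though real vortex wakes are far richer than this two-observation world.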

This type of navigation is similar to how eagles and hawks use thermals in the air to maneuver to a desired location with the least amount of energy expended. Surprisingly, the researchers discovered that their reinforcement learning algorithm could learn navigation strategies that were even more effective than those previously thought to be used by real fish in the ocean.

“We were hoping that the AI would be able to compete with navigation strategies already found in real swimming animals, so we were surprised to see it learn even more effective methods by utilizing repeated trials on the computer,” says Dabiri.

The technology is still in its infancy. For now, the team would like to test the AI on each type of flow disturbance it might encounter on an ocean mission, such as swirling vortices versus streaming tidal currents, to determine its effectiveness in the wild. The researchers hope to overcome this need for case-by-case testing by incorporating their knowledge of ocean-flow physics into the reinforcement learning strategy.

The current study demonstrates the potential effectiveness of RL networks in addressing this challenge, especially given their ability to operate on such small devices. To test this in the field, the team will mount the Teensy on a custom-built drone called the “CARL-Bot” (Caltech Autonomous Reinforcement Learning Robot). The CARL-Bot will be dropped into a new two-story-tall water tank on Caltech’s campus and taught to navigate ocean currents.

“Not only will the robot learn, but we’ll learn about ocean currents and how to navigate through them,” says Peter Gunnarson, a graduate student at Caltech and the paper’s lead author.